AI Ethics for Educators: Navigating Tools Responsibly

Artificial Intelligence (AI) is rapidly transforming education, offering innovative tools to enhance teaching and learning. But integrating AI also brings crucial ethical considerations and practical challenges. This guide provides educators with essential insights into AI ethics, responsible AI tool usage, and key factors to consider for effective and equitable implementation.

Understanding AI Ethics in Education

Ethical AI in education prioritizes fairness, transparency, and the well-being of students and educators. As AI tools become more common, it’s vital to:

- Address Bias: AI algorithms can amplify existing biases if trained on skewed data. This can lead to unfair outcomes in grading, admissions, or learning. Always critically evaluate AI outputs for bias and advocate for tools designed with diversity and inclusion in mind.

- Prioritize Privacy and Data Security: AI systems often collect vast amounts of student data, from personal information to academic records, which poses significant privacy risks. Institutions and educators must ensure informed consent, adhere to data protection protocols, and understand how user data is collected, stored, and shared. Never enter, upload, or share student information covered by privacy regulations such as FERPA in an AI tool.

- Ensure Transparency: The “black box” problem of AI means the rationale behind its outputs can be unclear. AI tools in education must be transparent, allowing educators and students to understand how information is processed and decisions are made.

- Uphold Academic Integrity: AI’s ability to generate content easily raises concerns about cheating and plagiarism. Establish clear guidelines for AI use, discuss ethical AI practices with students, and help them differentiate between AI assistance and academic dishonesty.
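For educators comfortable with a little scripting, the advice to evaluate AI outputs for bias can be made concrete. The sketch below is only an illustration, not a validated fairness audit: it assumes you have anonymized AI-assigned scores tagged with a group label, and the sample data, the function names, and the 5-point `REVIEW_THRESHOLD` are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def mean_score_by_group(records):
    """Return the average AI-assigned score for each group label."""
    scores = defaultdict(list)
    for group, score in records:
        scores[group].append(score)
    return {group: mean(values) for group, values in scores.items()}


def largest_gap(group_means):
    """Return the difference between the highest and lowest group average."""
    return max(group_means.values()) - min(group_means.values())


# Hypothetical, anonymized sample: (group label, AI-assigned score out of 100).
sample = [
    ("group_a", 82), ("group_a", 78), ("group_a", 89),
    ("group_b", 70), ("group_b", 74), ("group_b", 66),
]

REVIEW_THRESHOLD = 5  # assumed cutoff in points; pick one that fits your context

means = mean_score_by_group(sample)
gap = largest_gap(means)
needs_review = gap > REVIEW_THRESHOLD  # a flag for human review, not proof of bias
print(means, gap, needs_review)
```

A large gap does not prove the tool is biased, since groups can differ for many reasons. It is simply a prompt for an educator to look more closely at the underlying work before trusting the scores.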

Who Should Use AI Tools (and Why)

AI tools can be powerful allies for educators when used thoughtfully and strategically.

- Teachers Seeking Efficiency: AI can automate tasks like grading, tracking progress, generating lesson plans, creating quizzes, and managing schedules. This frees up valuable time for direct student interaction and personalized instruction.

- Educators Aiming for Personalized Learning: AI-powered tools can analyze student performance to provide tailored experiences. They adapt to individual paces and styles, offer customized feedback, and recommend resources, catering to diverse needs and improving comprehension.

- Instructors Developing Engaging Content: Generative AI can help create a range of educational resources, from lesson materials and activities to presentations. Some tools even generate interactive lessons.

- Teachers Desiring Data-Driven Insights: AI solutions can analyze large datasets to identify student performance trends, predict academic challenges, and provide insights for teaching strategies and early interventions.

- Educators Fostering New Learning Approaches: AI can facilitate interactive learning environments, including simulations, and support language acquisition with real-time feedback.

When NOT to Use AI Tools (and Why)

While AI offers many benefits, there are critical scenarios where its use by educators or students can be detrimental. Understanding these limits is key to responsible integration.

- When developing core cognitive skills: When the goal is to foster critical thinking, problem-solving, creativity, or independent learning, avoid over-reliance on AI. Allowing AI to complete complex tasks entirely can hinder intellectual growth and prevent students from developing essential skills that require effort and struggle.

- When genuine human connection is paramount: AI cannot replicate the social and emotional intelligence, empathy, motivation, or inspiration that human teachers provide. Do not let AI replace valuable teacher-to-student and peer-to-peer interactions, which are crucial for holistic development and building a supportive learning community.

- When factual accuracy is non-negotiable: Avoid solely relying on AI-generated content when factual integrity is critical. AI models can “hallucinate” (generate false information) or produce inaccurate and biased outputs. Always verify information from AI tools with reliable, human-vetted sources, especially for research or assessment.

- When facing or exacerbating digital inequity: Exercise caution when AI tools might deepen existing digital divides. If access to advanced AI tools is limited due to socioeconomic factors, over-reliance on them can unfairly disadvantage students without those resources, perpetuating educational inequities.

- When you do not yet understand the tool’s ethical implications: Never use AI tools without first understanding their ethical implications, including data privacy risks, potential biases embedded in the AI, and the environmental impact of large AI models. Responsible use begins with informed use.

- When the intent is academic dishonesty: Do NOT use AI for purposes that misrepresent a student’s own work or circumvent the learning process for an easy answer. This includes generating essays, solving problems without understanding, or plagiarizing content. Such uses undermine academic integrity and the very purpose of education.

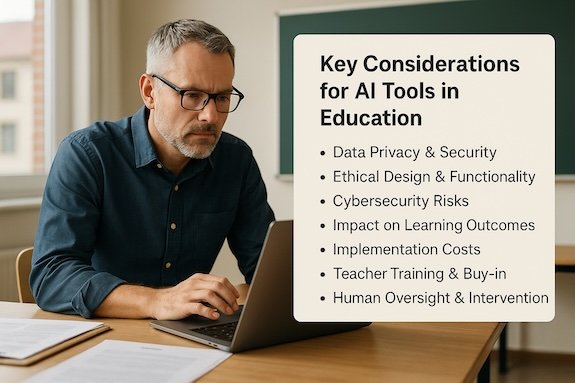

Key Considerations for AI Tools in Education

When considering or implementing AI tools, educators should be vigilant about several factors:

- Data Privacy & Security:

  - Scrutinize Data Practices: Always examine how tools collect, store, and share data.

  - Ensure Compliance: Verify adherence to privacy laws (e.g., FERPA).

  - Protect Student Data: Confirm robust protections are in place.

- Ethical Design & Functionality:

  - Investigate how the AI tool addresses bias, ensures transparency, and maintains human oversight.

  - Tools should augment, not replace, human expertise and judgment.

- Cybersecurity Risks:

  - AI adoption expands the attack surface for cyber threats.

  - Ensure robust cybersecurity protocols are in place to protect against data breaches.

- Impact on Learning Outcomes:

  - Continuously assess whether the AI tool genuinely enhances learning, critical thinking, and skill development.

  - Confirm it doesn’t inadvertently promote dependency or diminish the learning process.

- Implementation Costs:

  - Be aware of the financial implications, including initial setup, ongoing subscriptions, and potential infrastructure upgrades.

- Teacher Training & Buy-in:

  - Successful AI integration requires adequate professional development for educators to understand how to effectively and ethically use these tools.

- Human Oversight & Intervention:

  - AI should serve in a consultative role, augmenting but never fully replacing the responsibilities of educators.

  - There must always be a “human in the loop” to intervene, evaluate, and approve AI-driven decisions or outputs.

- Avoiding “App Overload”:

  - A streamlined approach to AI tool adoption is recommended to prevent overwhelming educators and ensure coherent integration within the curriculum.
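For teams building or configuring their own workflows, the “human in the loop” requirement above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of how any particular product works: the `Suggestion` class and the `review` and `release` functions are hypothetical names, and `student_ref` is assumed to be an anonymized reference rather than real student data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Suggestion:
    """An AI-produced output awaiting a decision by an educator."""
    student_ref: str                      # anonymized reference, not a real name or ID
    ai_output: str
    approved_by: Optional[str] = None
    final_output: Optional[str] = None


def review(suggestion, reviewer, accept, revised_output=None):
    """Record the educator's decision; nothing is final until this runs."""
    if accept:
        suggestion.final_output = suggestion.ai_output
    elif revised_output is not None:
        suggestion.final_output = revised_output
    else:
        raise ValueError("A rejected suggestion needs the educator's own output.")
    suggestion.approved_by = reviewer
    return suggestion


def release(suggestion):
    """Return the output for use, refusing anything no human has approved."""
    if suggestion.approved_by is None:
        raise PermissionError("No educator has reviewed this AI output yet.")
    return suggestion.final_output


# Example: the teacher rejects the AI draft and supplies a rewritten version.
draft = Suggestion(student_ref="student-017", ai_output="Feedback draft from the AI tool")
reviewed = review(draft, reviewer="teacher-01", accept=False,
                  revised_output="Feedback rewritten by the teacher")
print(release(reviewed))
```

The design choice worth noting is that `release` fails loudly when no reviewer is recorded, so an unreviewed AI output cannot reach a student by accident.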

By carefully considering these ethical dimensions and practical implications, you can harness the power of AI to create more personalized, efficient, and engaging learning experiences while upholding the core values of education.

What’s Inside the Checklist:

- Pre-use safety checks (privacy, transparency, bias)

- Ethical guidelines for student use

- Post-use reflection prompts

- Red flags to avoid

Empower your AI decisions—get the clarity you need.